Index your codebase. AI searches instead of re-reading files.

94% token savings, reproducibly benchmarked.


Python 3.11+ · macOS · Linux · Windows

One command. Auto-detects your editor. Zero cloud, zero config.

| | Use case | How CCE helps |
|---|---|---|
| 💰 | Reduce Claude Code costs | 94% fewer input tokens per session |
| 🔒 | Keep code private | Everything local, no cloud indexing |
| 🔄 | Multi-editor teams | One index across Claude Code, Cursor, VS Code, Gemini CLI |
| 🧠 | Cross-session memory | Decisions and context survive restarts |
| ⚡ | Faster responses | Less context = faster Claude replies |
| 📊 | Track actual savings | Dollar amounts, not estimates |

uv tool install code-context-engine

cd /path/to/your/project

cce init

That's it. Claude now searches your index instead of reading entire files. No config needed.

- Python 3.11+ (tested on 3.11, 3.12, 3.13)

- A C compiler and `cmake` (needed to build tree-sitter grammars)

| Platform | Setup |

|---|---|

| macOS | xcode-select --install (provides compiler and cmake) |

| Ubuntu/Debian | sudo apt install build-essential cmake |

| Fedora/RHEL | sudo dnf install gcc gcc-c++ cmake |

| Windows | Install Visual Studio Build Tools (C++ workload) and CMake |

Tested on all three platforms in CI (macOS, Linux, Windows × Python 3.11/3.12/3.13).

uv tool install code-context-engine # or: pipx install code-context-engine

cd /path/to/your/project

cce init # index, install hooks, register MCP server

Restart your editor. Done. Every question now hits the index instead of re-reading files.

cce init auto-detects your editor and writes the right config:

| Editor | Config written | Instructions |
|---|---|---|
| Claude Code | .mcp.json | CLAUDE.md |
| VS Code / Copilot | .vscode/mcp.json | |
| Cursor | .cursor/mcp.json | .cursorrules |
| Gemini CLI | .gemini/settings.json | GEMINI.md |
| OpenAI Codex | ~/.codex/config.toml (user-global, per-project section) | |
| OpenCode | opencode.json | |

Multiple editors in the same project? All get configured in one command.

Codex note: Codex CLI reads MCP servers from ~/.codex/config.toml only — it has no per-project config. cce init adds one [mcp_servers.cce-<project>-<hash>] section per project so multiple projects coexist; cce uninstall removes only the section for the current project.

my-project · 38 queries

⛁ ⛁ ⛁ ⛶ ⛶ ⛶ ⛶ ⛶ ⛶ ⛶ 94% tokens saved

Without CCE 48.0k tokens $0.14

With CCE 3.4k tokens $0.01

──────────────────────────────────────────

Saved 44.6k tokens $0.13

Cost estimate based on Sonnet input pricing ($3/1M tokens)
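The dollar figures in the banner above follow from the quoted rate. A minimal sketch of that arithmetic, assuming a flat $3 per 1M input tokens; the function name `input_cost_usd` is illustrative and not part of CCE:

```python
# Rate quoted in the banner: Sonnet input pricing, USD per 1M tokens.
SONNET_INPUT_USD_PER_MILLION = 3.00


def input_cost_usd(tokens: int, usd_per_million: float = SONNET_INPUT_USD_PER_MILLION) -> float:
    """Convert an input-token count into an estimated dollar cost."""
    return tokens / 1_000_000 * usd_per_million


without_cce = input_cost_usd(48_000)  # "Without CCE" row
with_cce = input_cost_usd(3_400)      # "With CCE" row
saved = input_cost_usd(44_600)        # "Saved" row

print(f"without ${without_cce:.2f}  with ${with_cce:.2f}  saved ${saved:.2f}")
```

Rounded to cents, these match the three rows of the banner.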

Input tokens are 85-95% of your Claude Code bill. CCE cuts them by 94% (benchmarked on FastAPI).

Without CCE: Claude reads payments.py + shipping.py = 45,000 tokens

With CCE: context_search "payment flow" = 800 tokens

| | Without CCE | With CCE |
|---|---|---|
| Session startup | Re-reads files every time | Queries the index |
| Finding a function | Read entire 800-line file | Get the 40-line function |
| Cross-session memory | None | Decisions + code areas persisted |
| Token cost (Sonnet, medium project) | ~$0.14/session | ~$0.04/session |

We benchmarked CCE against FastAPI (53 source files, 180K tokens) with 20 real coding questions. No cherry-picking, no synthetic queries.

Methodology: For each query, "without CCE" means reading the full content of every file the query touches. "With CCE" means the relevant chunks after compression. This is conservative (agents often read more files than needed).

| Metric | Result |

|---|---|

| Retrieval savings | 94% (83,681 → 4,927 tokens/query) |

| Compression (additional, on retrieved chunks) | 89% (4,927 → 523 tokens/query) |

| Recall@10 (found the right files) | 0.90 |

| Latency p50 | 0.4ms |

| Queries tested | 20 |
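The two percentages in the table can be recomputed from the per-query token counts it reports. A small sketch of that calculation; `savings_pct` is an illustrative helper, not a CCE function:

```python
def savings_pct(before: int, after: int) -> float:
    """Percentage of tokens removed when going from `before` to `after`."""
    return (1 - after / before) * 100


# Per-query averages taken from the table above.
retrieval = savings_pct(83_681, 4_927)   # full files -> retrieved chunks
compression = savings_pct(4_927, 523)    # retrieved chunks -> compressed chunks

print(f"retrieval {retrieval:.1f}%  compression {compression:.1f}%")
```

Note that the compression figure is relative to the already-retrieved chunks, not to the full-file baseline.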

| Layer | What it does | Savings | Method |

|---|---|---|---|

| Retrieval | Full files → relevant code chunks | 94% | measured |

| Chunk Compression | Raw chunks → signatures + docstrings | 89% | measured |

| Grammar | Drops articles/fillers from memory text | 13% | measured |

Output compression (reducing Claude's reply length) provides additional savings (~65% estimated) but is not included in the headline number above.

| Repo | Language | Files | Retrieval savings | Recall@10 |

|---|---|---|---|---|

| FastAPI | Python | 53 | 94% | 0.90 |

| chi | Go | 94 | 76% | 0.67 |

| fiber | Go (monorepo) | 396 | 93% | 0.07 |

Go's shorter files reduce the retrieval headroom (smaller baseline). Monorepos dilute recall at top-10 (fiber). Middleware queries with one-feature-per-file hit R=1.00 consistently.

Reproduce it yourself:

pip install code-context-engine

python benchmarks/run_benchmark.py --repo https://github.com/fastapi/fastapi.git --source-dir fastapi

python benchmarks/run_benchmark.py --repo https://github.com/go-chi/chi.git --source-dir .

Full results in benchmarks/results/. Queries and methodology in benchmarks/.

9 MCP tools that Claude uses automatically:

| Tool | What it does |
|---|---|
| `context_search` | Hybrid vector + BM25 search with graph expansion |
| `expand_chunk` | Full source for a compressed result |
| `related_context` | Find code via graph edges (calls, imports) |
| `session_recall` | Recall decisions from past sessions |
| `record_decision` | Save a decision for future sessions |
| `record_code_area` | Record which files were worked in |
| `index_status` | Check index freshness |
| `reindex` | Re-index a file or the full project |
| `set_output_compression` | Adjust response verbosity (off / lite / standard / max) |

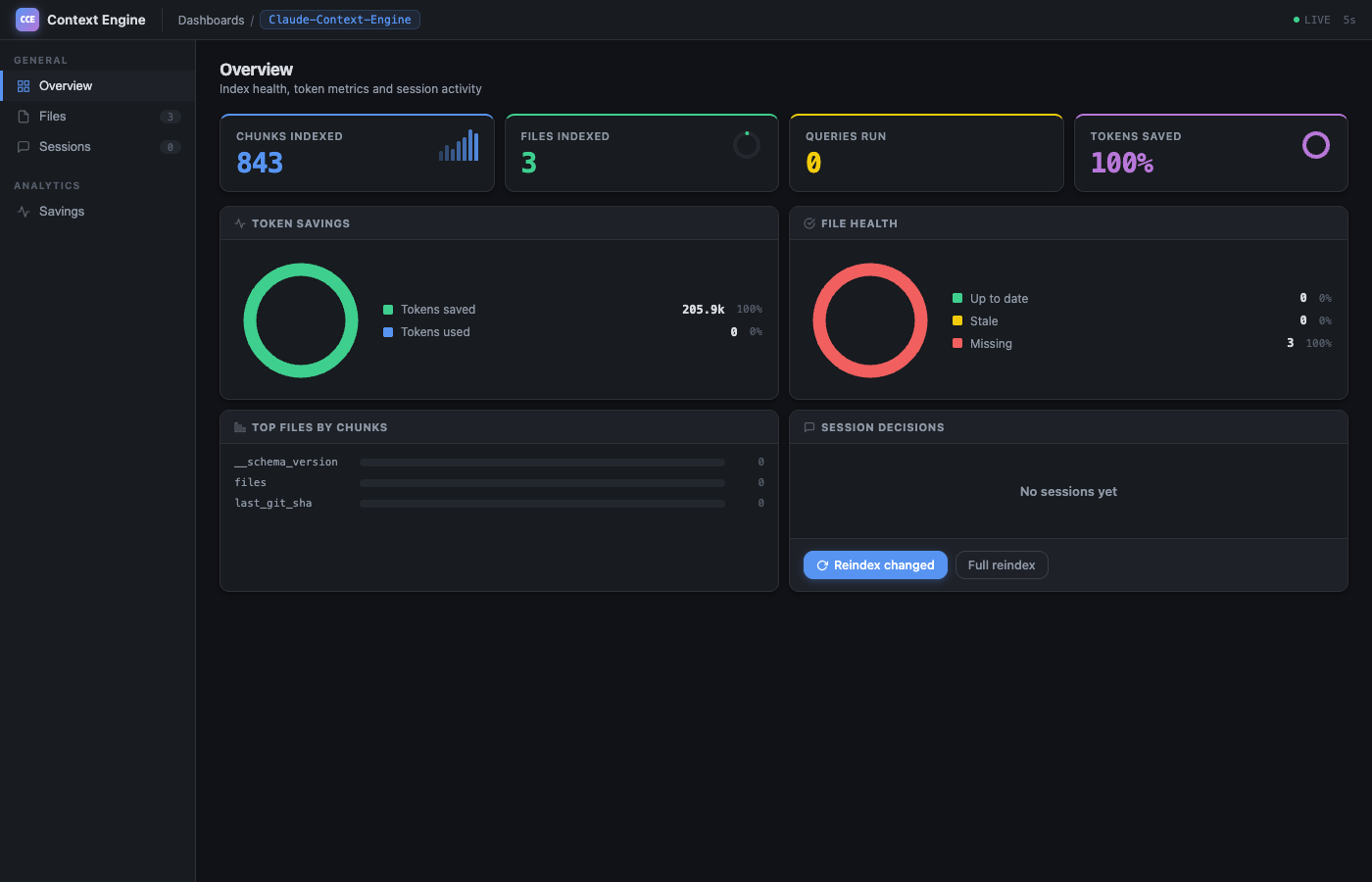

Live dashboard with donut charts, file health, and session history:

cce dashboard

Dollar estimates fetched from live Anthropic pricing:

cce savings --all # see savings across all projects

CCE is editor-agnostic, local-first, and gives you measurable token savings. Your code never leaves your machine. Unlike built-in indexing (Cursor, Continue), CCE works across Claude Code, VS Code, Cursor, Gemini CLI, and Codex with a single index. Unlike cloud tools (Greptile), it's free and private.

See the full comparison with alternatives for an honest look at trade-offs.

- Index: Tree-sitter parses your code into semantic chunks (functions, classes, modules). Stored as vector embeddings locally.
- Search: Claude calls `context_search`. Hybrid vector + BM25 retrieval finds the right chunks. Code graph adds related files automatically.
- Compress: Chunks are truncated to signatures + docstrings (or LLM-summarized if Ollama is running).
- Remember: Decisions and code areas persist across sessions via `session_recall`.
- Track: Every query is logged. `cce savings` shows exactly how much you saved.

Re-indexing after edits takes under 1 second (96% embedding cache hit rate). Git hooks keep the index current automatically.

Output compression tools (like Caveman) save 20-75% on output tokens, but output is only 5-15% of your bill. Net savings top out at about 11% (75% of 15%).

CCE saves on input tokens (94% retrieval savings on FastAPI, reproducibly benchmarked). Input is 85-95% of your bill.

Not a text search. Tree-sitter AST parsing creates semantic chunks. Hybrid retrieval merges vector similarity with BM25 keyword matching via Reciprocal Rank Fusion. A confidence scorer blends similarity (50%), keyword match (30%), and recency (20%). Graph expansion walks CALLS/IMPORTS edges to pull in related code.
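The fusion and scoring steps described above can be sketched in a few lines. This is an illustration under stated assumptions, not CCE's actual code: the function names are hypothetical, `k=60` is the constant conventionally used with Reciprocal Rank Fusion rather than a value confirmed by this README, and each confidence input is assumed to be normalized to the range 0 to 1.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists: each hit contributes 1 / (k + rank) to its chunk's score."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, chunk_id in enumerate(ranking, start=1):
            scores[chunk_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by chunk id so output is stable.
    return sorted(scores, key=lambda chunk_id: (-scores[chunk_id], chunk_id))


def confidence(similarity: float, keyword_match: float, recency: float) -> float:
    """Blend the three signals with the 50/30/20 weights quoted above."""
    return 0.5 * similarity + 0.3 * keyword_match + 0.2 * recency


# Hypothetical chunk ids from the two retrievers.
vector_hits = ["auth.py::login", "auth.py::refresh", "db.py::connect"]
bm25_hits = ["auth.py::login", "utils.py::slugify", "auth.py::refresh"]

fused = reciprocal_rank_fusion([vector_hits, bm25_hits])
print(fused)
print(confidence(similarity=0.9, keyword_match=0.5, recency=0.2))
```

Chunks that both retrievers agree on rise to the top, which is the main reason to fuse by rank rather than by raw score: vector distances and BM25 scores are not on comparable scales.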

record_decision("use JWT for auth", reason="session tokens flagged by legal") is stored in SQLite and surfaces via session_recall in the next session. No re-explaining your architecture.

Not estimates. Actual tokens served vs full-file baseline, broken down by buckets (retrieval, compression, output, memory, grammar). Dollar costs fetched from Anthropic's pricing page. Savings summary shown at every session start.

Secret files (.env, *.pem, credentials.json) are never indexed. Content is scanned for AWS keys, GitHub tokens, Slack tokens, Stripe keys, JWTs, and generic credentials. PII (emails, IPs, SSNs, credit cards) is scrubbed from memory writes. All MCP file paths are validated against path traversal.
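As a rough illustration of the first two checks, the sketch below filters by filename and scans content for one kind of credential. The pattern list covers only the three examples named above, the AWS regex is the commonly used access-key-ID shape, and both helper names are hypothetical; CCE's real rule set is broader.

```python
import re
from fnmatch import fnmatch
from pathlib import PurePosixPath

# Only the filename examples listed above; the real list is assumed to be longer.
SECRET_FILE_PATTERNS = (".env", "*.pem", "credentials.json")

# Widely used shape of an AWS access key ID: "AKIA" plus 16 uppercase letters/digits.
AWS_ACCESS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


def is_secret_file(path: str) -> bool:
    """True if the file name matches a pattern that should never be indexed."""
    name = PurePosixPath(path).name
    return any(fnmatch(name, pattern) for pattern in SECRET_FILE_PATTERNS)


def contains_aws_key(text: str) -> bool:
    """True if the text contains something shaped like an AWS access key ID."""
    return AWS_ACCESS_KEY_ID.search(text) is not None


print(is_secret_file("config/.env"), is_secret_file("src/app.py"))
print(contains_aws_key("aws_key=AKIAIOSFODNN7EXAMPLE"))
```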

Content-Hash Embedding Cache

SHA-256 fingerprint per chunk, salted with model name. Re-index skips unchanged code. Binary float32 storage (10x smaller than JSON). Typical re-index: 96% cache hit, under 1 second.
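A minimal sketch of the idea, assuming the salt is simply the model name hashed ahead of the chunk text and that vectors are stored as little-endian float32; the helper names and byte layout are illustrative, not CCE's actual format:

```python
import hashlib
import struct


def cache_key(chunk_text: str, model_name: str) -> str:
    """SHA-256 fingerprint of a chunk, salted with the embedding model name."""
    digest = hashlib.sha256()
    digest.update(model_name.encode("utf-8"))
    digest.update(b"\x00")  # separator so model name and text cannot run together
    digest.update(chunk_text.encode("utf-8"))
    return digest.hexdigest()


def pack_vector(vector: list[float]) -> bytes:
    """Store an embedding as raw float32 values: 4 bytes per dimension."""
    return struct.pack(f"<{len(vector)}f", *vector)


def unpack_vector(blob: bytes) -> list[float]:
    """Inverse of pack_vector."""
    return list(struct.unpack(f"<{len(blob) // 4}f", blob))


key_a = cache_key("def login(user): ...", "model-a")
key_b = cache_key("def login(user): ...", "model-b")
blob = pack_vector([0.5, 1.0, -2.0])
print(key_a != key_b, len(blob), unpack_vector(blob))
```

Salting with the model name means switching embedding models invalidates the cache automatically, since vectors from different models are not interchangeable.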

sqlite-vec: 2 MB instead of 217 MB

Replaced LanceDB with sqlite-vec. Same cosine-distance quality, 99% smaller install. WAL mode + PRAGMA synchronous=NORMAL for 80% write speedup. Vectors, FTS5, code graph, and compression cache all live in three SQLite files.
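For reference, this is how those two settings look with Python's built-in `sqlite3` module. It assumes the pragma in question is `synchronous=NORMAL`; `open_index` is a hypothetical helper, not CCE's API.

```python
import sqlite3


def open_index(path: str) -> sqlite3.Connection:
    """Open a SQLite file with write-ahead logging and relaxed fsync behaviour."""
    conn = sqlite3.connect(path)
    # WAL lets readers proceed while a writer appends to the log.
    conn.execute("PRAGMA journal_mode=WAL")
    # NORMAL syncs at checkpoints instead of on every commit.
    conn.execute("PRAGMA synchronous=NORMAL")
    return conn
```

The trade-off is durability: with `synchronous=NORMAL` in WAL mode, a power loss can drop the most recent commits but should not corrupt the database, which is acceptable for an index that can be rebuilt from source.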

Deterministic Grammar Compression

Memory entries compressed without LLM calls. Drops articles, fillers, pronouns. Three levels (lite/full/ultra, 20-60% savings). Code, paths, URLs preserved byte-for-byte. Same input always yields same output.
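To make the approach concrete, here is a toy version that drops articles and a few filler words while leaving backtick-quoted spans untouched. The word lists are illustrative assumptions, pronoun handling and the three levels are omitted, and `compress` is not CCE's function.

```python
import re

# Illustrative word lists; the real lists are assumed to be longer.
ARTICLES = {"a", "an", "the"}
FILLERS = {"just", "really", "basically", "actually", "simply"}
DROP = ARTICLES | FILLERS


def compress(text: str) -> str:
    """Remove articles and fillers outside of `code spans`, with no randomness."""
    parts = re.split(r"(`[^`]*`)", text)  # keep code spans as separate parts
    out = []
    for part in parts:
        if part.startswith("`"):
            out.append(part)  # code, paths, identifiers: preserved byte-for-byte
            continue
        words = part.split(" ")
        kept = [w for w in words if w.strip(".,;:!?").lower() not in DROP]
        out.append(" ".join(kept))
    return "".join(out)


note = (
    "We decided to use the JWT flow in `src/auth/the_token.py` "
    "because the session store is basically a bottleneck."
)
print(compress(note))
```

Because the function is a pure word filter, the same input always produces the same output, and the path inside backticks survives even though it contains `the`.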

Fail-Open Hook Design

5 Claude Code lifecycle hooks capture session context. Every hook runs curl ... || true, so a crashed server never blocks the user. SessionStart injects bootstrap context; others capture silently.

Dynamic Pricing

Dollar estimates in cce savings come from live Anthropic pricing (HTML table parsed, cached 7 days, offline fallback). No manual updates when rates change.

Append-Only Savings Ledger

7 buckets track every token saved: retrieval, chunk compression, output compression, memory recall, grammar, turn summarization, progressive disclosure. Survives restarts. Powers CLI and dashboard analytics.
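One way to picture an append-only ledger is a file that only ever gains lines, with totals derived by replaying it. The sketch below uses JSON Lines purely for illustration; the README does not say how CCE stores the ledger, and the bucket identifiers and function names here are assumptions derived from the list above.

```python
import json
from collections import Counter
from pathlib import Path

# Identifier spellings are assumed; the seven buckets come from the list above.
BUCKETS = (
    "retrieval",
    "chunk_compression",
    "output_compression",
    "memory_recall",
    "grammar",
    "turn_summarization",
    "progressive_disclosure",
)


def record_saving(ledger: Path, bucket: str, tokens_saved: int) -> None:
    """Append one entry; existing lines are never rewritten."""
    if bucket not in BUCKETS:
        raise ValueError(f"unknown bucket: {bucket}")
    with ledger.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps({"bucket": bucket, "tokens_saved": tokens_saved}) + "\n")


def totals(ledger: Path) -> Counter:
    """Replay the ledger and sum tokens saved per bucket."""
    counts: Counter = Counter()
    with ledger.open(encoding="utf-8") as fh:
        for line in fh:
            entry = json.loads(line)
            counts[entry["bucket"]] += entry["tokens_saved"]
    return counts
```

Since entries are only appended, totals survive a restart and can be recomputed at any time by the CLI or the dashboard.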

cce init # Index + install hooks + register MCP

cce # Status banner

cce savings # Token savings with dollar estimates

cce savings --all # All projects

cce dashboard # Web dashboard with live charts

cce search "auth flow" # Test a query

cce status # Index health + config

cce services # Ollama + dashboard + MCP status

cce commands add-rule '...' # Project rules for Claude

cce uninstall # Clean removal of all CCE artifacts

Run cce list for the full command reference.

Zero-config by default. Override what you need in ~/.cce/config.yaml or .context-engine.yaml:

compression:
  level: standard # minimal | standard | full
  output: standard # off | lite | standard | max
  ollama_url: http://localhost:11434 # point at a remote Ollama if desired
retrieval:
  top_k: 20
  confidence_threshold: 0.5
pricing:
  model: sonnet # sonnet | opus | haiku

Remote Ollama: If you run Ollama on another machine in your network, set compression.ollama_url (e.g. http://nas.local:11434) or export CCE_OLLAMA_URL; the env var wins. CCE probes the endpoint and falls back to truncation-only compression when it's unreachable, so a flaky link won't break indexing.
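The precedence rule can be summarized as a small lookup. This is a sketch of the described behaviour, not CCE's code: `resolve_ollama_url` is a hypothetical name, the config is assumed to be the parsed YAML as a dict, and the localhost default is assumed from the sample config above.

```python
import os

# Assumed default, taken from the sample config above.
DEFAULT_OLLAMA_URL = "http://localhost:11434"


def resolve_ollama_url(config: dict, environ=None) -> str:
    """Pick the Ollama endpoint: CCE_OLLAMA_URL first, then config, then default."""
    environ = os.environ if environ is None else environ
    env_url = environ.get("CCE_OLLAMA_URL")
    if env_url:
        return env_url  # the env var wins
    return config.get("compression", {}).get("ollama_url", DEFAULT_OLLAMA_URL)


config = {"compression": {"ollama_url": "http://nas.local:11434"}}
print(resolve_ollama_url(config, environ={}))
print(resolve_ollama_url(config, environ={"CCE_OLLAMA_URL": "http://gpu-box:11434"}))
```

Passing `environ` explicitly keeps the function easy to test; in normal use it reads the real process environment.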

CCE also compresses Claude's responses (same concept as Caveman):

| Level | Style | Savings |
|---|---|---|
| `off` | Full output | 0% |
| `lite` | No filler or hedging | ~30% |
| `standard` | Fragments, drop articles | ~65% |
| `max` | Telegraphic | ~75% |

Tell Claude: "switch to max compression" or "turn off compression". Code blocks and commands are never compressed.

| Component | Size |

|---|---|

| Installed package | ~189 MB (ONNX Runtime is 66 MB of that) |

| Embedding model (one-time download) | ~60 MB |

| Index per project (small/medium/large) | 5-60 MB |

No GPU required. Embedding model runs on CPU via ONNX Runtime.

AST-aware chunking (tree-sitter parsed, 10 extensions):

| Language | Extensions |

|---|---|

| Python | .py |

| JavaScript | .js, .jsx |

| TypeScript | .ts, .tsx |

| PHP | .php |

| Go | .go |

| Rust | .rs |

| Java | .java |

Language-aware fallback chunking (40+ extensions):

| Category | Languages |

|---|---|

| Web | HTML, CSS, SCSS, LESS, Vue, Svelte |

| Systems | C, C++, C#, Zig, Nim |

| Mobile | Swift, Kotlin, Dart |

| Functional | Haskell, Scala, Clojure, Elixir, Erlang, F# |

| Scripting | Ruby, Perl, Lua, R, Bash/Zsh |

| Data/Config | JSON, YAML, TOML, XML, SQL, GraphQL, Protobuf |

| DevOps | Terraform, HCL, Dockerfile |

| Docs | Markdown |

All other text files are chunked by line range. Binary files are skipped.

| Page | Content |

|---|---|

| What is CCE? (Complete Guide) | Setup, tools, how it works, FAQ |

| How to Save Claude Code Tokens | Cost breakdown and savings guide |

| Benchmark Deep Dive | Full FastAPI benchmark methodology |

| Comparison with Alternatives | CCE vs Cursor, Aider, Continue, Greptile |

| Examples | Real conversations with Claude |

| How It Works | Full 9-stage pipeline |

| CLI Reference | Every command with output |

| Configuration | All config options |

- Multi-repo benchmarks (FastAPI, chi, fiber)

- More benchmarks (Django, Express)

- Tree-sitter support for C, C++, Ruby, Swift, Kotlin

- Docker support for remote mode

See CHANGELOG.md for shipped features.

Contributions welcome. See https://github.com/elara-labs/code-context-engine/blob/main/CONTRIBUTING.md for setup.

MIT. See LICENSE.

Claude Code · MCP · sqlite-vec · Tree-sitter · fastembed · Ollama

If CCE saves you tokens, give it a star.